Dev Log 2018-05-05

Entity graph tests are partially passing, but there are issues at non-square resolutions and with certain entity origins. I know the model/view/projection matrix calculations aren’t quite right, so that’s the place to start investigating. It’s on the back burner while I work through the fundamental design of the OpenGL renderer.

The new renderer can already draw much of what the SDL renderer does, including textured entities with local transformations, debug OOBBs and AABBs, and single-line text strings.

Text rendering is more complex with OpenGL than with SDL. The SDL_ttf extension conveniently renders a string in a TTF font to a surface, which can then be turned into a texture. For OpenGL, I use FreeType to load the glyph data for each character of the string in the desired font. FreeType provides the information needed to determine the dimensions of the texture the text is drawn on, as well as the information needed to place each character's bitmap horizontally and vertically on that texture.

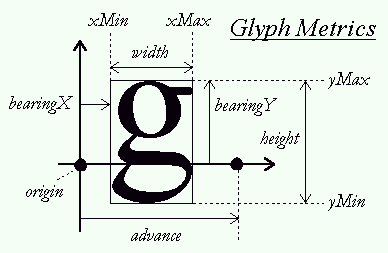

FreeType horizontal glyph metrics¶

FreeType provides each glyph's vertical bearing, which is the distance from the baseline of a line of text up to the top of the glyph. Everything is relative to the baseline. For a single-line texture, the height must be at least the largest baseline-to-top distance plus the largest baseline-to-bottom distance (glyph height minus bearing) over all the glyphs in the string. I calculate the texture height, then find the baseline's y offset within the texture. Finally, I position each character relative to the baseline.
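The single-line layout described above can be sketched roughly as follows. This is a minimal C++ sketch, not the renderer's actual code: `GlyphMetrics`, `LineLayout`, and `layoutLine` are hypothetical names, and the metrics are assumed to be already converted to whole pixels from FreeType's glyph slot (`bitmap.width`, `bitmap.rows`, `bitmap_left`, `bitmap_top`, and `advance.x >> 6`, since the advance is stored in 26.6 fixed point). Texture y is assumed to grow downward, and the first glyph's left bearing is assumed to be non-negative.

```cpp
#include <algorithm>
#include <vector>

// Hypothetical per-glyph metrics in whole pixels, mirroring FreeType's
// glyph slot fields (assumed already converted from 26.6 where needed).
struct GlyphMetrics {
    int width;     // bitmap.width
    int rows;      // bitmap.rows (bitmap height)
    int bearingX;  // bitmap_left: pen position to left edge of the bitmap
    int bearingY;  // bitmap_top: baseline up to the top edge of the bitmap
    int advance;   // advance.x >> 6: horizontal pen advance
};

struct PlacedGlyph {
    int x;  // left edge in texture pixels
    int y;  // top edge in texture pixels (y grows downward)
};

struct LineLayout {
    int textureWidth;
    int textureHeight;
    int baselineY;  // distance from the top of the texture to the baseline
    std::vector<PlacedGlyph> glyphs;
};

LineLayout layoutLine(const std::vector<GlyphMetrics>& glyphs) {
    int maxAbove = 0;  // largest baseline-to-top distance
    int maxBelow = 0;  // largest baseline-to-bottom distance
    int penX = 0;
    int width = 0;
    for (const GlyphMetrics& g : glyphs) {
        maxAbove = std::max(maxAbove, g.bearingY);
        maxBelow = std::max(maxBelow, g.rows - g.bearingY);
        width = std::max(width, penX + g.bearingX + g.width);
        penX += g.advance;
    }

    // Height is the sum of the two extremes; the baseline sits maxAbove
    // pixels below the top of the texture.
    LineLayout layout{std::max(width, penX), maxAbove + maxBelow, maxAbove, {}};

    penX = 0;
    for (const GlyphMetrics& g : glyphs) {
        // The top of each glyph sits bearingY pixels above the baseline.
        layout.glyphs.push_back({penX + g.bearingX, layout.baselineY - g.bearingY});
        penX += g.advance;
    }
    return layout;
}
```

For example, if the tallest glyph reaches 12 px above the baseline and a descender reaches 4 px below it, the texture is 16 px tall and the baseline sits 12 px from the top.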

In the nui rendering pass, I draw the characters to a texture and update the vertex data; ng then renders those entities with their textures in the usual way.
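One way to draw the characters to a texture is on the CPU: copy each glyph's 8-bit coverage bitmap into a single buffer at the position computed by the layout step, then upload the buffer once. The sketch below assumes that approach, which may differ from what the renderer actually does. `GlyphBitmap` and `blitGlyph` are hypothetical names, and the bitmap is assumed to be in FreeType's `FT_PIXEL_MODE_GRAY` format with a positive `pitch`.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical 8-bit grayscale glyph bitmap, shaped like FreeType's
// FT_Bitmap in FT_PIXEL_MODE_GRAY: `rows` rows of `pitch` bytes each,
// of which the first `width` bytes are used.
struct GlyphBitmap {
    int width;
    int rows;
    int pitch;  // bytes per source row (may exceed width); assumed positive
    std::vector<unsigned char> pixels;
};

// Copy a glyph's coverage values into a single-channel texture buffer of
// texWidth * texHeight bytes, with the glyph's top-left corner at (dstX, dstY).
// Pixels falling outside the texture are skipped. Where glyphs overlap, the
// larger coverage value is kept so neighbouring glyphs do not erase each other.
void blitGlyph(std::vector<unsigned char>& texture, int texWidth, int texHeight,
               const GlyphBitmap& glyph, int dstX, int dstY) {
    for (int row = 0; row < glyph.rows; ++row) {
        int y = dstY + row;
        if (y < 0 || y >= texHeight) continue;
        for (int col = 0; col < glyph.width; ++col) {
            int x = dstX + col;
            if (x < 0 || x >= texWidth) continue;
            unsigned char src =
                glyph.pixels[static_cast<std::size_t>(row) * glyph.pitch + col];
            unsigned char& dst =
                texture[static_cast<std::size_t>(y) * texWidth + x];
            if (src > dst) dst = src;
        }
    }
}
```

The finished buffer could then be uploaded as a single-channel texture, for example with `glPixelStorei(GL_UNPACK_ALIGNMENT, 1)` followed by `glTexImage2D(GL_TEXTURE_2D, 0, GL_RED, width, height, 0, GL_RED, GL_UNSIGNED_BYTE, buffer.data())`. The unpack alignment matters because a one-byte-per-pixel row is generally not a multiple of 4 bytes long.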

The next steps for text are to make sure different font types and styles work, and then to support multi-line strings. The latter should be straightforward: FreeType font data provides the information needed to calculate the baseline-to-baseline distance, so I should be able to extend the single-line algorithm above to create a texture for multiple lines of text.
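A hedged sketch of how that multi-line extension might look: lay out each line with the single-line pass, then stack the baselines `lineHeight` pixels apart. `LineExtents`, `BlockLayout`, and `layoutBlock` are hypothetical names. With FreeType, the baseline-to-baseline distance is available as `face->size->metrics.height`, a 26.6 fixed-point value that has to be converted to pixels before it is passed in here.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical per-line extents, e.g. produced by a single-line layout pass.
struct LineExtents {
    int above;  // largest baseline-to-top distance on this line
    int below;  // largest baseline-to-bottom distance on this line
    int width;  // pixel width of the line
};

struct BlockLayout {
    int textureWidth;
    int textureHeight;
    std::vector<int> baselines;  // y of each baseline, measured from the texture top
};

// lineHeight is the baseline-to-baseline distance in whole pixels.
BlockLayout layoutBlock(const std::vector<LineExtents>& lines, int lineHeight) {
    BlockLayout block{0, 0, {}};
    if (lines.empty()) return block;

    // The first baseline leaves room for the tallest glyph on the first line.
    int firstBaseline = lines.front().above;
    int height = 0;
    for (std::size_t i = 0; i < lines.size(); ++i) {
        int baseline = firstBaseline + static_cast<int>(i) * lineHeight;
        block.baselines.push_back(baseline);
        block.textureWidth = std::max(block.textureWidth, lines[i].width);
        // The texture must reach the lowest descender on any line.
        height = std::max(height, baseline + lines[i].below);
    }
    block.textureHeight = height;
    return block;
}
```

Each line's glyphs would then be placed relative to its own baseline exactly as in the single-line case.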

The OpenGL renderer currently allocates a VAO and multiple VBOs for each entity instance. This isn't efficient, but it was the shortest path from the OpenGL tutorials to the functionality I wanted. Normal textured entities have a VAO, a VBO for the model vertices, a VBO for UV coordinates, and a VBO for AABB vertices. Text entities have a VAO and a single VBO shared by the model vertices and UV coordinates: because text currently uses a static depth (tutorial stuff), each vertex's 2D position and UV coordinates are sent to the shader together in one vec4 vertex attribute. There are two separate rendering paths to handle the different setups. I'll consolidate them as I work through the use cases and develop a better understanding of OpenGL state and the relationships between VAOs, VBOs, and shaders.
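Packing position and UV into one vec4 per vertex for the text path might look like the following. This is a sketch, not the renderer's code: `TextVertex` and `makeTextQuad` are hypothetical, and a y-up model space with counter-clockwise winding (OpenGL's default front face) is assumed. The top edge of the quad maps to v = 0, which matches a texture buffer whose first row is the top of the text.

```cpp
#include <array>

// One vertex of a text quad, packed into a single vec4 attribute:
// x, y in model space plus u, v texture coordinates. No depth is stored
// because text is drawn at a fixed depth.
struct TextVertex {
    float x, y, u, v;
};

// The struct must be exactly four tightly packed floats to match a vec4.
static_assert(sizeof(TextVertex) == 4 * sizeof(float),
              "TextVertex must be tightly packed for a vec4 attribute");

// Two counter-clockwise triangles covering a w x h quad whose top-left
// corner is at (x, y) in a y-up coordinate system.
std::array<TextVertex, 6> makeTextQuad(float x, float y, float w, float h) {
    return {{
        {x,     y,     0.0f, 0.0f},  // top-left
        {x,     y - h, 0.0f, 1.0f},  // bottom-left
        {x + w, y - h, 1.0f, 1.0f},  // bottom-right
        {x,     y,     0.0f, 0.0f},  // top-left
        {x + w, y - h, 1.0f, 1.0f},  // bottom-right
        {x + w, y,     1.0f, 0.0f},  // top-right
    }};
}
```

With the VBO bound, the six vertices could be uploaded with `glBufferData(GL_ARRAY_BUFFER, sizeof(quad), quad.data(), GL_DYNAMIC_DRAW)` and described to the shader as one attribute with `glVertexAttribPointer(0, 4, GL_FLOAT, GL_FALSE, sizeof(TextVertex), nullptr)` and `glEnableVertexAttribArray(0)`; attribute location 0 is an assumption. That attribute setup is recorded in whichever VAO is currently bound.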